#Install spark on windows jupyter notebook windows

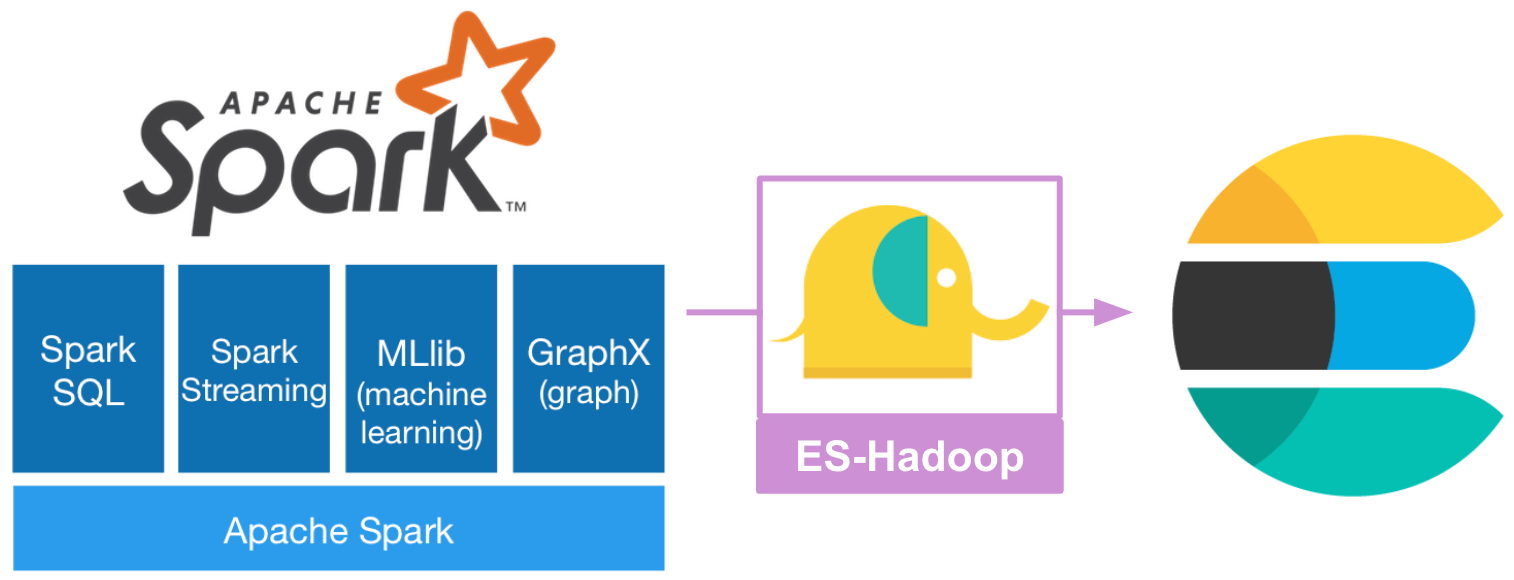

Similar story: just decompress the Hadoop folder in a location you like. I put it on C (don't judge me), so we can all assume I have a folder like this: C:\hadoop-2.7.1

Before setting the env variable, make sure you replace the entire bin folder with the one you downloaded from the link above. It's a simple surgery: delete the existing bin folder in C:\hadoop-2.7.1 (or wherever you put your Hadoop), and drag and drop the downloaded bin folder in its place.

Finally, set your environment variables. If you don't do this, this is all quite pointless, so please don't forget.

You should now add the env variables to your Path, if you haven't already. This means you will need to edit the Path environment variable. Do not delete or re-create Path, as this will mess with a plethora of other software you might have installed, and since it gets stored in the Windows Registry, clicking without thinking will have you crying in no time.
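If you'd rather double-check the Path edit than trust your clicking, here is a minimal Python sketch. It only reads a Path-style string and never modifies it. The helper names `path_entries` and `is_on_path` are made up for illustration, and checking the Hadoop `bin` folder is an assumption on my part; swap in whichever folder you actually added.

```python
import ntpath  # Windows path rules, available on every platform
import os


def path_entries(path_value, sep=";"):
    """Split a Windows Path string into normalized, non-empty entries."""
    entries = []
    for raw in path_value.split(sep):
        cleaned = raw.strip().strip('"')
        if cleaned:
            # normpath drops trailing backslashes, normcase lowercases
            entries.append(ntpath.normcase(ntpath.normpath(cleaned)))
    return entries


def is_on_path(directory, path_value, sep=";"):
    """Return True if `directory` is listed in the Path string."""
    target = ntpath.normcase(ntpath.normpath(directory))
    return target in path_entries(path_value, sep)


if __name__ == "__main__":
    # Assumed folder: adjust to wherever you unpacked Hadoop.
    hadoop_bin = r"C:\hadoop-2.7.1\bin"
    print(is_on_path(hadoop_bin, os.environ.get("PATH", "")))
```

Run it from a freshly opened command prompt, since shells that were already open do not pick up edited variables.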

#Install spark on windows jupyter notebook update

If you want to place it elsewhere, or name it differently, that's fine - just make sure you adjust the paths used in this tutorial to match.

Since I'm a bad person, I just unpacked it on the main drive and left it there. I called my folder spark-2.2.0-bin-hadoop2.7, so let's make the assumption from now on that you have a folder like this: C:\spark-2.2.0-bin-hadoop2.7

An important note here is that, if you unpack it in a folder whose path contains spaces, you will get all kinds of nasty bugs one page down the line. Just be grateful I found this out for you, and just remove all spaces :)
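To spot an offending folder before it bites you, a tiny sketch like the following lists every part of a path that contains a space. The function name `find_spaces` is hypothetical, and the second example path is only there to show a failing case.

```python
def find_spaces(path):
    """Return the components of a Windows-style path that contain a space."""
    # Treat forward and backward slashes the same way.
    parts = path.replace("/", "\\").split("\\")
    return [part for part in parts if " " in part]


if __name__ == "__main__":
    print(find_spaces(r"C:\spark-2.2.0-bin-hadoop2.7"))   # no offenders
    print(find_spaces(r"C:\Program Files\spark"))         # one offender
```

An empty list means the location is safe as far as spaces go.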

#Install spark on windows jupyter notebook install

You don't really install Spark - you just decompress it in a folder on a location you like.

#Install spark on windows jupyter notebook full

This is just a Windows-compiled binary for Hadoop - not needed on Linux, but obviously we're in Windows territory now. Once you have all six items, we're ready to roll!

Installation

Java

After you're done, you should open a command prompt shell (⊞ Win + R and type 'cmd') and check you have succeeded by typing: java -version

Unfortunately, Java is a harsh mistress - so this probably won't work. That's because, unlike in Unix-based systems where you can just use export, in Windows you need to manually set your environment variables. To do this, you can use setx from the command prompt, but mistypes will probably cause a large amount of tears. Better be a noob and use the GUI like I do: go to Control Panel > System > Advanced system settings > Environment Variables and click on 'New' for the System Variables.

Variable value: C:\Program Files\Java\jdkXXX

Obviously you need to replace XXX with the correct folder name - in my case it's jdk1.8.0_151, but it depends on which devkit version you downloaded and installed.

Now, time for Scala: click on the installer, and then on Next until you're done. Once again, you'll have to set the environment variable for Scala if the installer failed to do so.

Variable value: C:\Program Files (x86)\scala\bin

But wait! Why are we in x86 instead of just the regular (x64) Program Files folder? No idea, but I can confirm Scala is able to allocate the full memory space on my machine.
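The `java -version` check can also be scripted, which is handy once you are inside a notebook. This is a rough sketch using only the Python standard library; `java_version_output` is a name I made up. Note that `java -version` writes its banner to stderr rather than stdout, so the function reads both.

```python
import shutil
import subprocess


def java_version_output():
    """Return the text printed by `java -version`, or None if java is not usable."""
    if shutil.which("java") is None:
        return None  # java is not reachable through Path
    try:
        result = subprocess.run(
            ["java", "-version"],
            capture_output=True,
            text=True,
            timeout=30,
        )
    except (OSError, subprocess.TimeoutExpired):
        return None
    # The version banner normally lands on stderr.
    return (result.stderr or result.stdout).strip()


if __name__ == "__main__":
    output = java_version_output()
    print(output if output is not None else "java was not found on Path")
```

If this prints the not-found message, the environment variables are the first thing to recheck.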

#Install spark on windows jupyter notebook download

This technical entry covers the pretty involved process of rolling out Spark, Hadoop and PySpark on a Windows machine. Your bucket list starts here! To run Spark on your machine, you'll need: